
11/20/2023

Conditional entropy

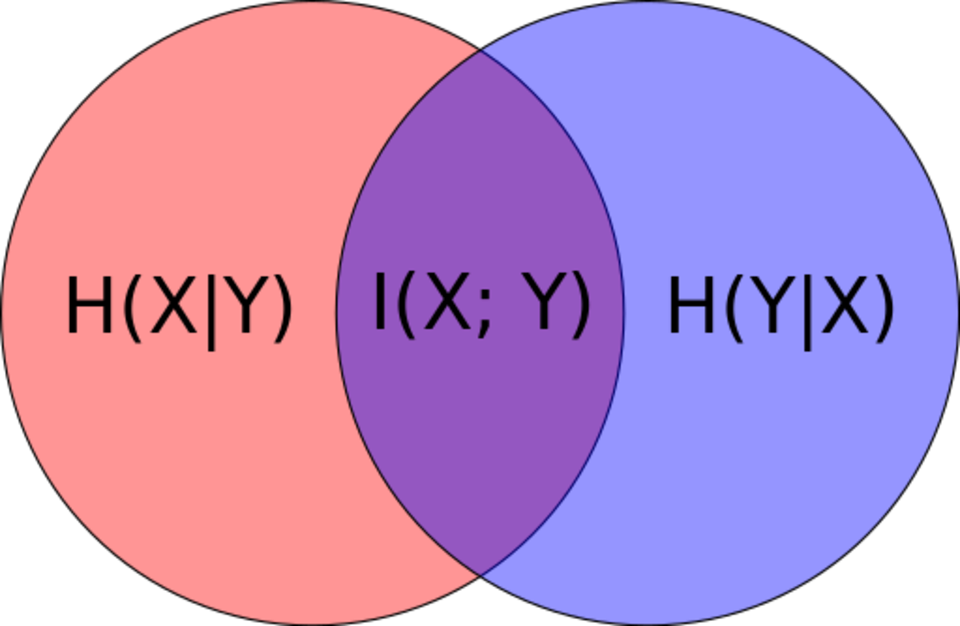

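The conditional information between $x_i$ and $y_j$ is defined as

$$I(x_i \mid y_j) = \log \frac{1}{P(x_i \mid y_j)}.$$

The mutual information $I(x_i; y_j)$ between $x_i$ and $y_j$ is defined as

$$I(x_i; y_j) = \log \frac{P(x_i \mid y_j)}{P(x_i)}.$$

They give an example for mutual information in the book. If you find in this approach any inaccuracies or a better explanation I'll be happy to read about it.

Recall that the entropy of a rv $X$ over $\mathcal{X}$ is defined by

$$H(X) = -\sum_{x} P_X(x) \log P_X(x)$$

(where the summation is over the domain of $X$). Shorter notation: for $X \sim p$, let $H(p) = H(X)$.

The joint entropy of (jointly distributed) rvs $X$ and $Y$ with $(X, Y) \sim p$ is

$$H(X, Y) = -\sum_{x, y} p(x, y) \log p(x, y).$$

The joint entropy measures how much uncertainty there is in the two random variables $X$ and $Y$ taken together.

In information theory, the conditional entropy (or equivocation) quantifies the remaining entropy (i.e. uncertainty) of a random variable given that the value of another random variable is known. For $x \in \mathrm{Supp}(X)$, the random variable $Y \mid X = x$ is well defined, so $H(Y \mid X = x)$ is simply the entropy of that random variable.

Definition of conditional entropy: $H(Y \mid X)$ is the average conditional entropy of $Y$,

$$H(Y \mid X) = \sum_j \mathrm{Prob}(X = v_j)\, H(Y \mid X = v_j).$$

The conditional entropy of $X$ given $Y$, $H(X \mid Y)$, is defined the same way with the roles of $X$ and $Y$ swapped.

Example:

| $v_j$ | $\mathrm{Prob}(X = v_j)$ | $H(Y \mid X = v_j)$ |
| --- | --- | --- |
| Math | 0.5 | 1 |
| History | 0.25 | 0 |
| CS | 0.25 | 0 |

$$H(Y \mid X) = 0.5 \cdot 1 + 0.25 \cdot 0 + 0.25 \cdot 0 = 0.5$$

Causal entropy (Kramer, 1998; Permuter et al., 2008),

$$H(A^T \| S^T) \triangleq E_{A,S}\left[-\log P(A^T \| S^T)\right] = \sum_{t=1}^{T} H(A_t \mid S_{1:t}, A_{1:t-1}),$$

measures the uncertainty present in the causally conditioned distribution. The subtle, but significant difference from conditional probability,

$$P(A \mid S) = \prod_{t=1}^{T} P(A_t \mid S_{1:T}, A_{1:t-1}),$$

serves as the underlying basis for our approach: in the causal case each $A_t$ is conditioned only on $S_{1:t}$, the part of $S$ seen up to time $t$, whereas the ordinary conditional probability conditions on the whole sequence $S_{1:T}$.

I'm trying to understand the relationship between maximum likelihood estimation for a model of the type $p(y \mid x)$ and the conditional cross-entropy. The conditional cross-entropy is an expectation, therefore it is not a random variable, but a number.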
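As a quick check of the Math / History / CS example, here is a minimal Python sketch that evaluates the weighted-average formula for $H(Y \mid X)$ and the joint entropy $H(X, Y)$. The helper names (`entropy`, `conditional_entropy`) are made up for this illustration. The per-major distributions of $Y$ are also an assumption: the example only gives the entropies 1, 0 and 0, so the sketch uses a 50/50 split for Math and a deterministic answer for History and CS, which reproduce those entropies but are not necessarily the original data.

```python
import math


def entropy(probs):
    """Shannon entropy in bits; zero-probability outcomes contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def conditional_entropy(p_x, p_y_given_x):
    """H(Y|X) = sum_j Prob(X = v_j) * H(Y | X = v_j)."""
    return sum(p_x[v] * entropy(p_y_given_x[v].values()) for v in p_x)


# Prob(X = v_j), taken from the example.
p_x = {"Math": 0.5, "History": 0.25, "CS": 0.25}

# Assumed conditional distributions of Y, chosen only so that
# H(Y|X=Math) = 1 and H(Y|X=History) = H(Y|X=CS) = 0.
p_y_given_x = {
    "Math": {"Yes": 0.5, "No": 0.5},
    "History": {"Yes": 0.0, "No": 1.0},
    "CS": {"Yes": 1.0, "No": 0.0},
}

h_y_given_x = conditional_entropy(p_x, p_y_given_x)

# Joint distribution p(x, y) = Prob(X = x) * Prob(Y = y | X = x).
p_xy = {
    (x, y): p_x[x] * p_y
    for x, dist in p_y_given_x.items()
    for y, p_y in dist.items()
}
h_xy = entropy(p_xy.values())
h_x = entropy(p_x.values())

print("H(Y|X) =", h_y_given_x)
print("H(X)   =", h_x)
print("H(X,Y) =", h_xy)
```

Under these assumed distributions the script also illustrates the chain rule $H(X, Y) = H(X) + H(Y \mid X)$: the joint entropy equals the entropy of $X$ plus what remains uncertain about $Y$ once $X$ is known.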
